Linda Berberich, PhD - Founder and Chief Learning Architect, Linda B. Learning, mastering the piano via her “musical ears,” circa 2019

Hi, I’m Linda. Thanks so much for checking out the March 2026 edition of my Linda Be Learning newsletter. If you are just discovering me, I encourage you to check out my website and my YouTube channel to learn more about the work I do in the field of learning technology and innovation.

As I mentioned in the past two editions, my background in learning is very, very broad, and in 2026 the newsletter themes examine technology fashioned after the sensory and perceptual abilities of humans, animals, and sometimes even plants, highlighting how machine learning is often a poor mimic of the complex ways sentient beings learn.

So far, I’ve examined Vision (January) and Gustation (February), and this month the focus is on Audition, more commonly referred to as hearing. If you read the January edition, you learned that until I was 43 years old, I had totally garbage eyesight. Like many others who are born with severe sensory differences, this “deficit” resulted in the heightening of my other senses: my hearing, my sense of smell, my sense of taste, but especially my kinesthetic senses (of which there are several, not just one, a point we will revisit in the April edition). This raises a question: is it really a deficit, or simply a difference? And if it’s simply a difference, why do we put so much emphasis on “fixing” the individual instead of adapting the environment to be more inclusive?

When it comes to hearing in particular, people who don’t experience deafness assume all deaf people WANT “normal” hearing, without ever considering that deaf culture is alive and well and people within that community have strong opinions about this topic.

If the deaf person happens to be a child, that decision is often made for them, before they ever have the opportunity to make that decision for themselves. And the general public sees this as a good thing.

But if you ask deaf people themselves, you might be surprised by how they answer. For example, consider deaf advocate Marlee Matlin’s perspective.

For me personally, I never necessarily saw my poor eyesight as a deficit, because my other senses picked up the slack. And I should have ruined my hearing by now: by falling asleep inside the speaker of my parents’ cabinet stereo, where I would hide to eavesdrop on the partying adults when I was a little kid; through all the music I’ve listened to at way too high a volume; via all the live shows I’ve attended during my lifetime; and through my own rock star aspirations, working with amps, mics, and soundboards.

Yet I’ve always had a keen ear and picked up the piano faster than any other adult my piano teacher had ever taught in her 40+ years of piano instruction — and she complimented me on my “good ears” in her delightful Polish accent every chance she got. I was often the ONLY adult playing in our winter and summer recitals, and even for the first one, I played towards the end, with the teenagers and young adults who had been playing for many years.

But it isn’t just music for me. I am very sensitive to the sound of other people’s voices and can recognize a familiar voice even if several decades have passed since I last spoke with them, a point I revisit later in this edition with my sound model examples. I also pick out and identify accents easily, and can understand what people are saying even through what others might describe as a thick accent. I’ve also come to terms with the fact that the sound of a person’s voice can either attract or repulse me, an idiosyncrasy I’ve never been able to get over, even if I otherwise have opposing feelings about the person overall.

So in this edition, we are going deep on hearing, the tech, how computers “hear” versus how living organisms hear, and whether we’re doing the right thing by “correcting” hearing differences when there are other, more inclusive approaches we might consider taking instead.

Tech to Get Excited About

I am always discovering and exploring new tech. It’s usually:

recent developments in tech I have worked on in the past,

tech I am actively using myself for projects,

tech I am researching for competitive analysis or other purposes, and/or

my clients’ tech.

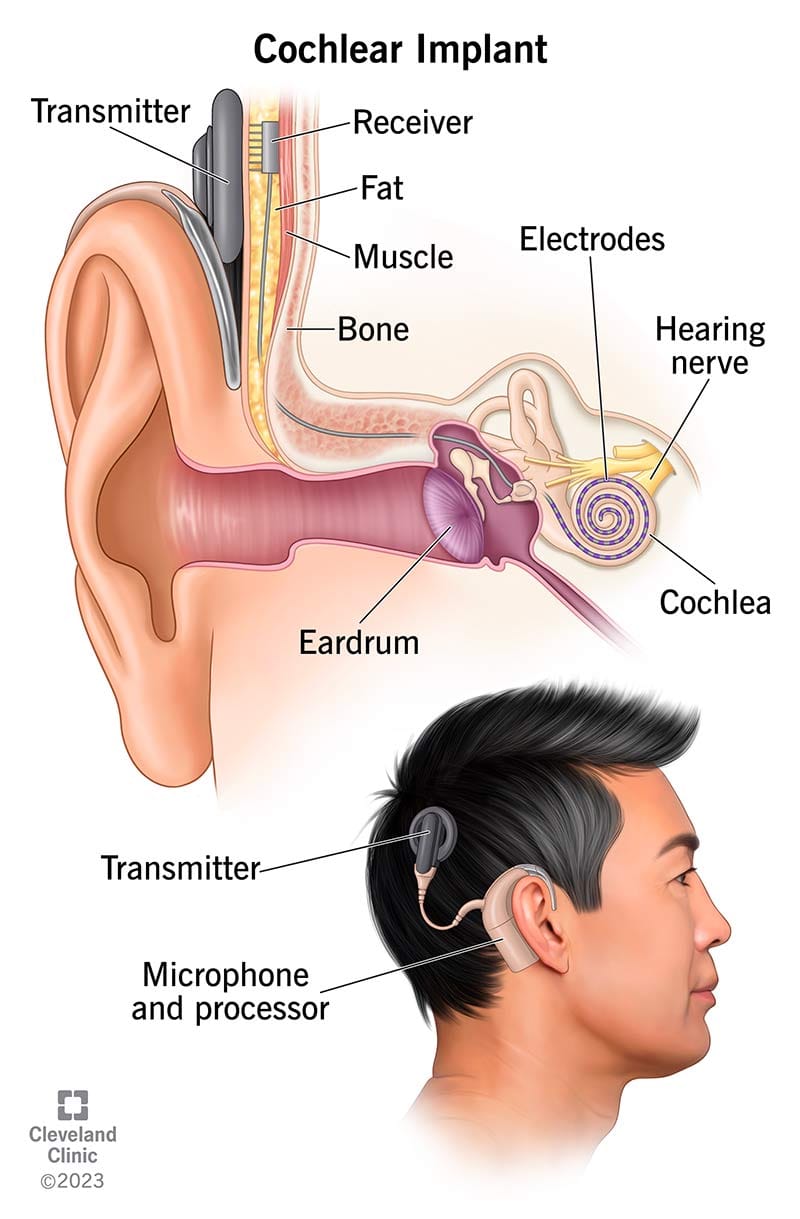

This month, we’re going to begin by looking at the hearing technology addressed in the introductory videos, cochlear implants.

Cochlear Implants

Cochlear implants are electronic devices that help manage hearing loss. Healthcare providers may recommend this treatment if you have moderate to profound hearing loss in one or both ears and you’re not benefiting from hearing aids. A cochlear implant may also be recommended if your hearing aids work but you still can’t understand speech as well as you’d like. Cochlear implants don’t restore hearing, but they can improve your ability to understand speech and hear other sounds.

Unlike hearing aids, which turn the volume up on sounds, cochlear implants bypass damaged parts of the ear. Most hearing problems happen because of damaged sensory hair cells inside the cochlea (sensorineural hearing loss). The cochlea, deep inside the inner ear, changes sound signals that enter the ear into electrical impulses the brain interprets as sound.

Cochlear implants create new pathways for sounds to reach the brain. An external mic and sound processor capture sound signals coming into the outer ear. The sound processor sends the signals to the transmitter, which is usually attached to the scalp with a magnet, as shown in the diagram below. The transmitter changes the signals into electrical impulses that travel to the receiver, an implant that is attached under the skin, opposite the transmitter. The receiver sends the impulses to the electrodes inside the cochlea, which collect the impulses and send them on to the auditory nerve. The auditory nerve carries those impulses to the brain, where they are perceived as speech, music or other noise.

Cochlear implants create a new hearing pathway in your ear.
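To make that pathway a bit more concrete, here is a heavily simplified Python sketch of one job a cochlear implant's sound processor performs: splitting incoming sound into frequency bands and assigning each band to an electrode, with low frequencies going to electrodes deeper in the cochlea (toward the apex) and high frequencies to electrodes near the base. It is loosely inspired by channel-based strategies such as continuous interleaved sampling (CIS), but the channel count, band edges, and the `band_energies` helper are hypothetical choices for illustration, not any manufacturer's actual processing. It assumes NumPy is installed.

```python
import numpy as np

def band_energies(signal, sample_rate, n_channels=8, f_lo=200.0, f_hi=8000.0):
    """Hypothetical helper: energy in n_channels log-spaced frequency bands.

    Channel 0 is the lowest band (an apical, deeply inserted electrode);
    the last channel is the highest band (a basal electrode).
    """
    power = np.abs(np.fft.rfft(signal)) ** 2              # power in each FFT bin
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    # Log-spaced band edges echo the cochlea's roughly logarithmic layout.
    edges = np.geomspace(f_lo, f_hi, n_channels + 1)
    energies = np.zeros(n_channels)
    for ch in range(n_channels):
        in_band = (freqs >= edges[ch]) & (freqs < edges[ch + 1])
        energies[ch] = power[in_band].sum()
    return edges, energies

sr = 16000                                  # samples per second
t = np.arange(sr) / sr                      # one second of time stamps
tone = np.sin(2 * np.pi * 440 * t)          # a 440 Hz test tone
edges, energies = band_energies(tone, sr)
ch = int(np.argmax(energies))
print(f"440 Hz drives channel {ch} ({edges[ch]:.0f}-{edges[ch + 1]:.0f} Hz)")
```

A real processor typically works on short, overlapping frames and stimulates electrodes in rapid succession; this sketch analyzes the whole clip at once just to show the frequency-to-electrode mapping.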

Advanced Bionics provides an advanced cochlear implant system to help people restore hearing or experience sound for the first time. Hearing loss affects millions of people of all ages around the world. For many, hearing aids provide a viable solution, but if you or a loved one experience significant hearing loss, hearing aids may offer little or no benefit. That’s when it’s time to consider cochlear implants, a medical device technology that thousands of people around the world rely on to restore hearing.

To learn more, visit www.advancedbionics.com.

Technology for Good

Short of cochlear implants, what other assistive technology is available for the hearing impaired? For the moment, the focus seems to be on AI-powered hearing aids using machine learning to analyze sound in real time. But is that the only approach, and is it the best approach, given some of the concerns that Marlee Matlin raised?

Let’s look at some other options.

Sign Language Interpretation Technologies

Consider, for example, the extensive use of sign language by the deaf and hard-of-hearing community, and the emerging technologies in sign language interpretation. These technologies are designed to enhance communication for people who use sign language and include software applications, wearable devices, and advanced machine learning algorithms. One prominent example is smart gloves that can translate hand signs into spoken words. Equipped with sensors, these gloves capture movements and gestures, which are then converted into audio output, effectively bridging the gap between sign language users and non-users.

AI-driven software has also made significant strides in real-time video interpretation. These programs analyze sign language captured via camera and translate it almost instantly, allowing for smoother, more natural interactions. We’re also seeing augmented reality (AR) and virtual reality (VR) platforms beginning to play a role in creating immersive, engaging environments for teaching and learning sign language, which benefits both deaf individuals and those looking to communicate with them effectively.

Emerging technologies in sign language interpretation have the potential to bridge the communication gap between sign language users and non-users, fostering inclusivity, access and equal opportunity. Sign language users can play a crucial role in the development and evaluation of these technologies by participating in user testing and feedback processes. Collaborating with deaf communities and advocacy groups ensures that the tools developers create are not only technologically advanced but also meet the practical needs of users.

By joining these initiatives and providing feedback, sign language users can help shape features, usability aspects, and the real-world application of emerging technologies. Getting involved in advocacy groups focused on accessibility and technology also amplifies users’ voices in larger conversations about accessibility standards and innovation.

Robots Learning Sign Language

Teaching sign language to robots represents another shift toward making communication more accessible and unrestricted. If robots can master sign language, deaf individuals will be able to interact with them seamlessly, without needing a human interpreter. This leap in technology holds the promise to reshape accessibility and transform lives globally.

It’s a promising endeavor, blending robotics, artificial intelligence, and linguistics, to handcraft a future where communication hurdles are lessened. Learn more here.

The Signs Platform

Did you know that American Sign Language (ASL) is the third most prevalent language in the United States, after English and Spanish? Yet very few AI tools are developed with ASL data. So NVIDIA, the American Society for Deaf Children and the creative agency Hello Monday joined forces and created Signs, an interactive web platform built to support ASL learning and the development of accessible AI applications.

Sign language learners access the platform’s validated library of ASL signs to expand their vocabulary with the help of a 3D avatar that demonstrates signs — and use an AI tool that analyzes webcam footage to receive real-time feedback on their signing. Signers of any skill level can contribute by signing specific words to help build a video dataset for ASL.

Bonus video, file under the “just because we can, doesn’t mean we should” category, for those interested in a deeper dive. Actor Marlee Matlin and groundbreaking researchers E.J. Chichilnisky, Jim Hudspeth, and Daniel Kish discuss recent breakthroughs in vision and hearing that may soon render many forms of blindness and deafness reversible.

But not everyone welcomes this future. Deafness is not just a disability; it is a culture with its own language and history. For many in that community, ‘cure’ equates to cultural genocide. With blindness, the issues are different but just as difficult, something I can attest to from direct lived experience.

Tech Retrospective: Voice Recognition vs Computer Hearing

You can read about the history of how voice recognition has evolved over time into the technology we know today. What you’ll find in most accounts is what the technologies were able to do (the outcomes), but very little about HOW this “recognition” takes place. In other words, and beyond just voice recognition, how do computers “hear,” and how similar to or different from that is the way humans and other living organisms hear?

In the January edition of this newsletter, you learned that computer vision is a subfield of machine learning that empowers machines to interpret and make decisions based on visual data input from the world around them. It is literally the automated extraction, analysis, and “understanding” of relevant information from one or more images. Why, then, are speech and voice recognition not conceptualized as computer hearing and automated in the same way? Because, unlike computer vision, which was originally conceptualized through early neural networks modeled in part on frogs’ visual systems, the technology wasn’t modeled after hearing.

Basically, current audio recognition and interpretation models are built around the fact that computers are dumb and cannot directly understand or interpret nature’s analog signals. As a result, sound must first be converted into a digital signal, which is the first opportunity for misinterpretation to take place. A microphone converts the sound wave into an analog electrical signal, and sampling is the first step in converting that analog signal into a digital one: the signal is measured at regular intervals, and the number of measurements per second is the sample rate. The higher the sample rate, the higher the frequencies that can be captured (a digital recording can represent frequencies up to half its sample rate), which generally means better audio quality.

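But even after all this, our dumb computer still can’t understand the signal. Bit depth (how many bits are used to store each sample, which determines how finely loudness levels can be distinguished) and bit rate (how many bits are stored per second of audio) also come into play. If you’re not a developer, this can get confusing fast, so check out this brief video that does a good job of explaining it.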
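For readers who’d like to see sample rate, bit depth, and bit rate side by side, here is a minimal Python sketch. It assumes a pure sine wave stands in for the microphone’s analog signal, and the helper names `sample_and_quantize` and `bit_rate` are hypothetical. Sampling picks evenly spaced instants (the sample rate), quantization rounds each measurement to one of a fixed number of integer levels (the bit depth), and the uncompressed bit rate is simply sample rate × bit depth × number of channels.

```python
import math

def sample_and_quantize(freq_hz, sample_rate, bit_depth, duration_s=0.001):
    """Hypothetical helper: sample a pure tone, then quantize each sample.

    The "analog" signal is just math.sin evaluated at evenly spaced instants,
    a toy stand-in for an analog-to-digital converter.
    """
    max_level = 2 ** (bit_depth - 1) - 1          # e.g. 32767 for 16-bit audio
    n_samples = int(sample_rate * duration_s)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate                                  # time of the n-th sample
        analog_value = math.sin(2 * math.pi * freq_hz * t)   # between -1 and 1
        samples.append(round(analog_value * max_level))      # quantization step
    return samples

def bit_rate(sample_rate, bit_depth, channels):
    """Hypothetical helper: uncompressed bit rate in bits per second."""
    return sample_rate * bit_depth * channels

cd = sample_and_quantize(1000, 44100, 16)
coarse = sample_and_quantize(1000, 44100, 3)      # only 7 levels: -3 .. 3
print(len(cd), "samples in 1 ms at 44.1 kHz")
print("16-bit:", cd[:5])
print(" 3-bit:", coarse[:5])
print(bit_rate(44100, 16, 2), "bits per second for CD-quality stereo")
print("Highest representable frequency:", 44100 / 2, "Hz")
```

With CD-quality settings (44,100 samples per second, 16 bits, two channels), the uncompressed bit rate works out to 1,411,200 bits per second, and the highest frequency the recording can represent is 22,050 Hz, comfortably above the roughly 20,000 Hz upper limit of human hearing. The 3-bit version shows how a low bit depth flattens the wave into a handful of coarse steps.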

If you’d like a more practical understanding of how computers hear, check out this article written especially for Python developers, which includes a Colab notebook to help you get started with the Gemini Live API for audio conversations.

I recently started working on some of my own voice models again. But I did my original voice recognition and voice synthesis work when I was in grad school in the early 1990s, before DragonTalk even existed.

These examples come from the same model, which I built from a friend’s voice.

This first one was spontaneously generated and meant to disguise the original voice, although it still mimics his voice pretty accurately (excuse the profanity; I would have edited it out, but I thought it was funny).

This second example is a direct replication, indistinguishable from the individual’s actual voice.

I share these last examples to illustrate that these days, it’s not that difficult to create and work with sound models. And there is still much more we could be doing with hearing technology, especially if we dare to think outside the box and reconceptualize the possibilities of the HOW.

Learning Theory: Audition and Hearing

Sensation and perception are subfields of biological psychology, and they are also studied within anatomy and physiology in the field of biology. Let’s review the human auditory system, which, incidentally, also significantly affects your balance, since the inner ear houses both the cochlea and the vestibular organs that sense head position and motion.

Audition, the sense that allows us to perceive and interpret sound, plays a crucial role in our daily lives, from communicating with others to enjoying music and being aware of our environment. Sound originates from vibrations that create waves of pressure in the air (or another medium, such as water), which our ears can detect. These vibrations can come from many sources, from a struck tuning fork to leaves rustling in the wind.

Frequency measures the number of cycles per second in a sound wave and determines the pitch of the sound. Human hearing is typically sensitive to frequencies ranging from approximately 20 hertz (Hz) to 20,000 Hz. Different animals have varying audible ranges based on their physiology.

Amplitude refers to the height of a sound wave, representing the change in air pressure. This characteristic is closely related to our perception of loudness. Amplitude is usually expressed as sound pressure level in decibels (dB), a logarithmic scale measured relative to a reference pressure, and the greater the amplitude, the louder the sound. However, loudness is not solely determined by amplitude, as frequency also plays a significant role.
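Because decibels are logarithmic, equal steps in dB correspond to multiplying, not adding, pressure. Here is a minimal Python sketch, assuming the standard 20 micropascal reference pressure used for sound pressure level in air; the `spl_db` name is just for illustration.

```python
import math

P_REF = 20e-6  # reference pressure in pascals (20 micropascals), near the threshold of hearing at 1 kHz

def spl_db(pressure_pa):
    """Sound pressure level in dB for an RMS pressure given in pascals."""
    return 20 * math.log10(pressure_pa / P_REF)

print(round(spl_db(20e-6)))   # the reference pressure itself
print(round(spl_db(0.02)))    # roughly the level of conversational speech
print(round(spl_db(0.2)))     # ten times more pressure than the line above
```

Every tenfold increase in pressure adds 20 dB (and doubling the pressure adds about 6 dB), which is why a sound at 80 dB carries ten times the pressure of one at 60 dB rather than a third more.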

As frequency increases, the threshold for detecting a sound follows a curved path, first lowering (making the sound easier to detect) and later rising (making very high-pitched sounds harder to detect). For this reason, a tone presented at the same amplitude (measured in decibels) can fall below this threshold and go undetected at very low frequencies, be easily heard at somewhat higher frequencies, and then go unnoticed again at very high frequencies.
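The curved threshold can be approximated numerically. The sketch below uses Terhardt’s widely cited formula for the absolute threshold of hearing (it appears, for example, in some perceptual audio coders); it is a smooth fit to average young-adult data, not a model of any individual’s hearing, and the 20 dB test level and `threshold_db_spl` name are arbitrary choices for illustration.

```python
import math

def threshold_db_spl(freq_hz):
    """Approximate absolute threshold of hearing in dB SPL (Terhardt's formula)."""
    f = freq_hz / 1000.0  # the formula is written in kHz
    return (3.64 * f ** -0.8
            - 6.5 * math.exp(-0.6 * (f - 3.3) ** 2)
            + 1e-3 * f ** 4)

TONE_LEVEL_DB = 20  # the same physical level for every tone (an arbitrary choice)
for freq in (50, 1000, 3300, 18000):
    thresh = threshold_db_spl(freq)
    verdict = "heard" if TONE_LEVEL_DB > thresh else "missed"
    print(f"{freq:>6} Hz: threshold {thresh:6.1f} dB SPL -> 20 dB tone {verdict}")
```

Run as written, the 20 dB tone is missed at 50 Hz, heard at 1,000 Hz and 3,300 Hz, and missed again at 18,000 Hz, tracing the same curved threshold described in the paragraph on detection thresholds.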

The auditory neural pathway processes and transmits auditory information from the ear to the primary auditory cortex, the brain's primary center for sound perception. Like the basilar membrane in the cochlea, this pathway is organized tonotopically: different regions of the auditory cortex respond to specific ranges of sound frequencies, with high-frequency sounds activating one region and low-frequency sounds activating another. This tonotopic map lets the brain process auditory information in an orderly, place-by-frequency way, much as a retinotopic map in vision organizes visual stimuli by their location in the visual field.
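The tonotopic layout of the basilar membrane is often summarized with the Greenwood function, an empirical fit relating a position along the membrane to the frequency that position responds to best. The sketch below uses commonly cited constants for the human cochlea (A = 165.4, a = 2.1, k = 0.88, with position measured as a fraction of membrane length from the apex); it is an outside illustration of the idea, not a precise model of any individual ear, and `greenwood_hz` is a hypothetical name.

```python
def greenwood_hz(position):
    """Best frequency (Hz) at a point on the human basilar membrane.

    position: fraction of the membrane's length measured from the apex
    (0.0 = apex, low frequencies; 1.0 = base, high frequencies).
    Constants are the commonly cited human fit of the Greenwood function.
    """
    A, a, k = 165.4, 2.1, 0.88
    return A * (10 ** (a * position) - k)

for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{x:4.2f} of the way from the apex -> {greenwood_hz(x):8.0f} Hz")
```

Equal steps along the membrane multiply the frequency rather than add to it (roughly 20 Hz at the apex, about 400 Hz a quarter of the way along, about 1,700 Hz halfway, and about 20,700 Hz at the base), which is why the frequency map is close to logarithmic. The same place-to-frequency relationship is why the position of each electrode in a cochlear implant matters.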

Tonotopic organization is not the only similarity between auditory and visual perception: we can also encounter ambiguities in both sensory modalities. Like visual illusions such as the infamous dress that could appear blue and black or white and gold, auditory illusions can also confound our senses. One such auditory illusion made waves across the internet: the Laurel-Yanny audio clip.

In the Laurel-Yanny clip, some individuals hear "Laurel" while others perceive "Yanny." Remarkably, this perception can vary from one person to another and even change depending on the equipment used, such as speakers or headphones. To dissect this illusion, researchers examined the clip's spectrogram, a visual display of how the energy at each frequency changes over time. They found that the high-frequency components of the sound leaned more towards "Yanny," while the low-frequency elements sounded more like "Laurel." By altering the auditory input, boosting either the high or the low frequencies, researchers could influence how individuals perceived the clip.
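We can’t reproduce the actual clip here, so the sketch below uses a hypothetical stand-in: one low tone and one high tone of equal strength. It shows the kind of manipulation involved in the Laurel-Yanny experiments, a crude frequency-domain equalizer that boosts everything above (or below) a cutoff, and measures how the balance of energy shifts. The 1,000 Hz cutoff, 12 dB gain, and helper names are arbitrary assumptions, not the researchers’ actual settings, and it assumes NumPy is installed.

```python
import numpy as np

def tilt_spectrum(signal, sample_rate, cutoff_hz, gain_db, boost="high"):
    """Hypothetical helper: scale every frequency above (or below) cutoff_hz by gain_db.

    A blunt, brick-wall equalizer in the frequency domain; real audio tools
    use smoother filters, but the principle is the same.
    """
    if boost not in ("high", "low"):
        raise ValueError("boost must be 'high' or 'low'")
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    gain = 10 ** (gain_db / 20)                    # dB -> amplitude factor
    mask = (freqs >= cutoff_hz) if boost == "high" else (freqs < cutoff_hz)
    spectrum[mask] *= gain
    return np.fft.irfft(spectrum, n=len(signal))

def high_low_ratio(signal, sample_rate, cutoff_hz):
    """Hypothetical helper: energy above cutoff_hz divided by energy below it."""
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    return power[freqs >= cutoff_hz].sum() / power[freqs < cutoff_hz].sum()

sr = 16000
t = np.arange(sr) / sr
# Stand-in "clip": a low 300 Hz tone plus a high 3,000 Hz tone of equal strength.
clip = np.sin(2 * np.pi * 300 * t) + np.sin(2 * np.pi * 3000 * t)
print(f"original: high/low energy ratio = {high_low_ratio(clip, sr, 1000):.2f}")
for boost in ("high", "low"):
    shaped = tilt_spectrum(clip, sr, cutoff_hz=1000, gain_db=12, boost=boost)
    print(f"boost {boost}: high/low energy ratio = {high_low_ratio(shaped, sr, 1000):.2f}")
```

Boosting the highs by 12 dB multiplies high-band power by roughly 16, tipping the balance toward the part of the spectrum that, in the real clip, leaned toward “Yanny”; boosting the lows does the opposite.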

Another similarity is that, like human vision, hearing does not work in isolation. The brain integrates sensory data into a seamless experience of reality, a process called multisensory integration. In some people, the senses blend in a remarkable phenomenon called synesthesia. Synesthesia comes in various forms, such as music-color synesthesia, where musical notes evoke specific colors. This blending of sensory experiences, while unusual, is not considered a disorder but rather a variation in perception. Certain areas of the brain play a pivotal role in this phenomenon, and synesthesia appears to run in families, suggesting a genetic component. Individuals with synesthesia may possess specific gene variants that result in cross-wiring between sensory regions of the brain. This cross-wiring may also be linked to a greater propensity for metaphorical thinking and creativity, and synesthesia is often found among artists, poets, and novelists.

Given the similarities between auditory and visual sensory processes, we might revisit the question of why we have computer vision but not computer hearing. I’d like to drive this point home by very briefly examining how humans perceive language. When we listen to spoken language, we tend to think we are perceiving individual words as distinct units. In reality, however, there aren't always clear pauses or silences between words.

Newborns have the remarkable ability to distinguish between various phonemes (the smallest units of sound in language) from all possible languages. However, as they grow, they start focusing on the specific phonemes relevant to their native language, filtering out those that aren't essential. This filtering process begins with vowels and later extends to consonants.

Language perception is complex, and our brains develop the ability to parse and understand spoken language over time. In essence, what seems like a series of isolated words is actually a continuous stream of sound, seamlessly processed by our brains.

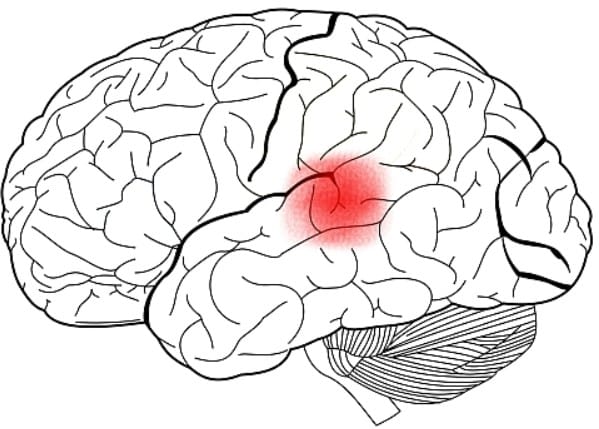

Wernicke's area is a critical region in the human brain that plays a pivotal role in the understanding of spoken language. Located in the posterior part of the left hemisphere in most people, Wernicke's area is an integral component of the broader language processing network. The primary function of Wernicke's area is to process the auditory information received from the ears and convert it into meaningful linguistic representations. This region is especially important for the comprehension of spoken language, allowing individuals to decipher and make sense of the sounds and words they hear during conversations, lectures, or any form of oral communication.

Diagram showing Wernicke's area.

Now compare this complex neural approach to the rudimentary way sound is digitized and interpreted. And when you consider how Natural Language Processing (NLP) works, it becomes even more apparent how computers and algorithms miss out on the subjective sensory experience that is sound, a point we will revisit in the July edition when we focus on speaking technologies.

Science can describe receptors, neural pathways, and brain regions, but it cannot fully capture the subjective wonder of auditory sensation. The sound of your mother’s voice, that song that always takes you back to a particular point in time, the melody that becomes an earworm: these are more than biology. They are moments where science and soul meet, where the mechanics of perception transform into meaning.

The science of the senses teaches us not only how we hear and perceive but also how precious perception is, and how it differs from the mechanical world of sound processing and voice recognition. Every sound wave is a miracle shaped by evolution and refined by the mind. In understanding our senses, we glimpse the extraordinary reality that, through them, the universe knows itself.

How Other Animals Hear

Do humans hear better than animals, or is it the other way around? The answer to this question is a bit more complicated than you may think.

Humans and many other animals can hear a wide range of sounds. We can hear low and high notes and both quiet and loud sounds. We are also very good at telling the difference between similar sounds, like the speech sounds “argh” and “ah,” and at picking apart sounds that are mixed together, like the instruments in an orchestra. It turns out that the inner ear determines much of our hearing ability. Many other mammals can hear very high notes that we cannot, and some can hear quiet sounds that we cannot. However, humans may be better than any other species at distinguishing similar sounds. We know this because, milliseconds after the sounds around us go into our ears, other sounds come out: sounds that are actually produced by those same ears. These sounds, called otoacoustic emissions, reveal how sharply the inner ear is tuned to different frequencies.

However, keep in mind that although our hearing abilities are pretty impressive, enabling us to interpret the world, we fall way behind some other creatures with far superior auditory skills.

How Plants Hear

Before we consider whether or how plants hear, have you ever thought about listening to your plant? This cool tech allows you to do just that!

Another common belief is that plants grow better if you talk to them or play music for them. A couple of YouTube Shorts disputed this belief, but they also used very short comparison cycles. When in doubt, consult the MythBusters.

Plants may or may not dance to the beats of your favorite song, but if they hear the munching sound of a caterpillar, that’s another story. On sensing the approach of a predator, some plants flood their leaves with chemical defenses that are specifically designed to ward off attackers. For example, thale cress (Arabidopsis thaliana) produces a large amount of mustard oil in its leaves, and when an unsuspecting caterpillar consumes too much of it, the caterpillar succumbs to the poison and dies.

Some researchers have begun applying rigorous standards to the study of plant hearing, with experiments on plants like corn. Their preliminary results indicate that corn roots grow towards vibrations at specific frequencies. Even more surprising is the finding that roots themselves may also emit sound waves. So while there isn’t consensus on how a plant might produce sound signals, the evidence suggests that plants can detect these signals and act on them, depending on whether the source is friend or foe.

Upcoming Learning Offerings

Completely unrelated to sound, apart from the fact that music selection is a big part of my group fitness experiences…

As I discussed in the April 2025 edition of this newsletter, in addition to the work I do with Linda B Learning, I also have an extensive fitness and athletic training background. I wanted to build a YouTube for fitness training way back in 2001, because I recognized early on how much a visual medium like online video would affect people’s ability to make connections, work out with each other, and share knowledge with people all over the world.

But YouTube came along not long afterwards, and in 2009 I created my Fit Mind-Body Conditioning channel. This year I have revived that work and am offering two classes each month, broadcast live on YouTube.

On the Full Moon, we do yang energy workouts. So far, we’ve done a step and strength workout, an upper body/core strength training workout, and a gliding workout. During the New Moon, the focus is on yin energy, so yoga and other mind-body practices are featured during those live sessions.

Be sure to subscribe to the channel and turn on notifications so you’ll know when we go live or when other new channel content drops. More is coming from Fit Mind-Body Conditioning as I move towards a more formal relaunch of the brand, so stay tuned!

That’s all for now. I hope you have been “hearing me” about the difference between how humans and other sentient beings sense and perceive the world and how computers and algorithms poorly mimic it. I also hope you considered that those of us who have experienced significant sensory differences don’t necessarily see them as deficits — believe me, I was a lot happier when I couldn’t see the way other people often look at me. And if we’re building tech without including the people who we are supposed to be serving with what we’re building, what are we even doing?

Next month, we’ll dig into how you really “feel” about it!

Until then…

I know you aren’t, Martin!